
That alone, he explained, was enough to allow the red team to construct its own ML model to test its attack offline.

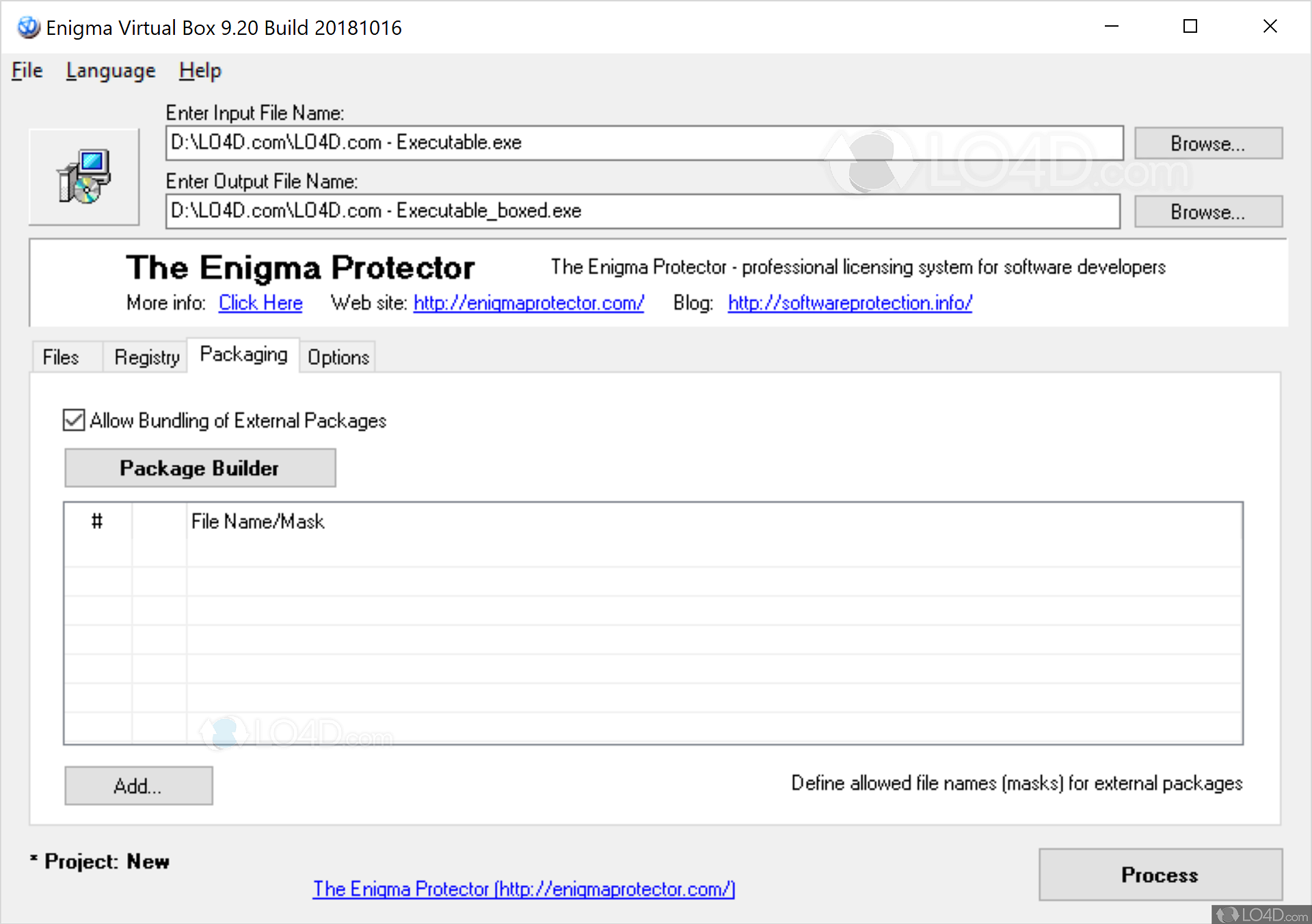

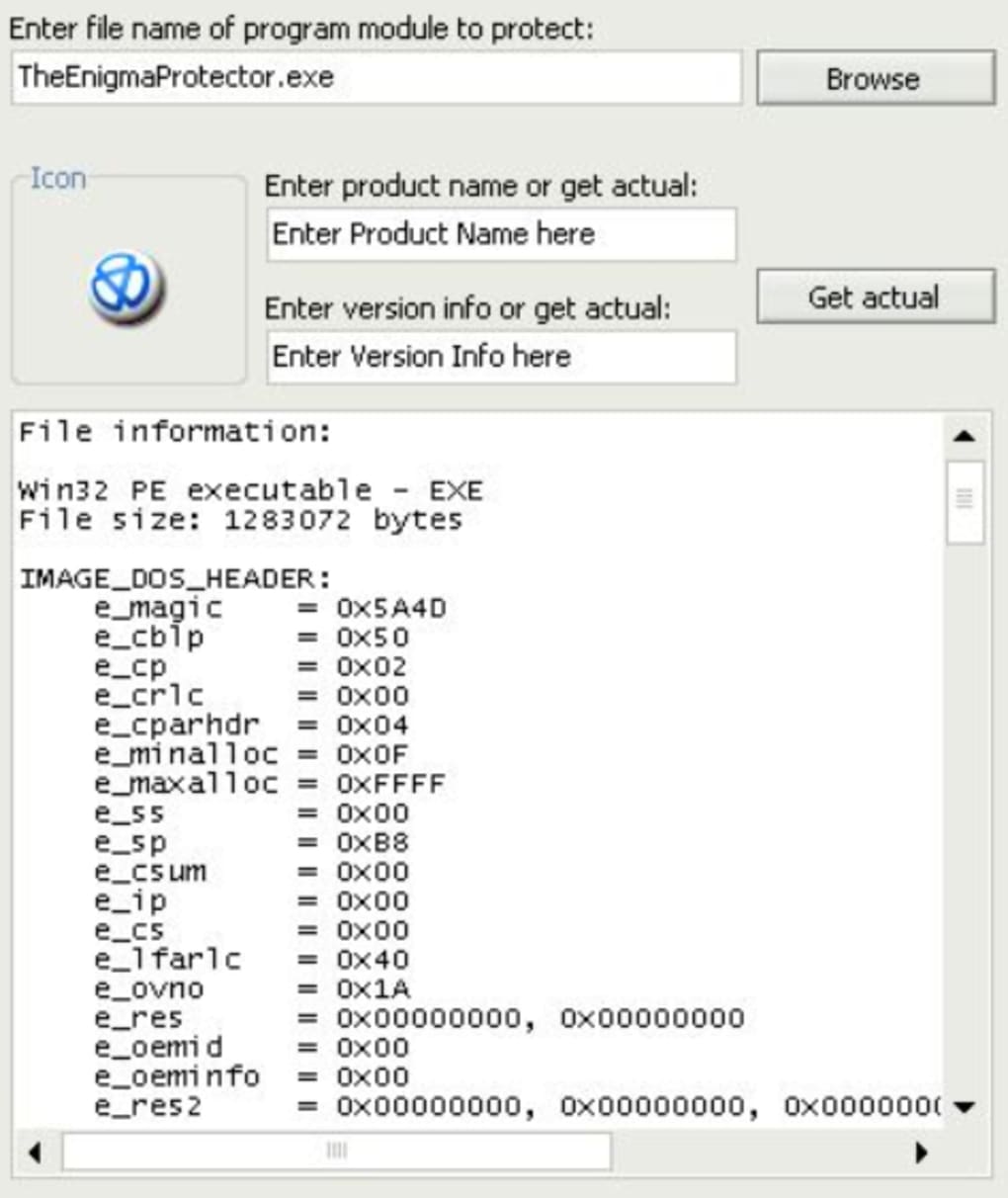

First was that the team had read-only access to training data for the model and second was that the team found details about how the model handled data featurization – the conversion of data into numerical vectors. The savings for doing so at a company with over 160,000 employees can be significant, said Anderson.ĭuring the reconnaissance phase of the exercise, the red team found that its credentials gave it access to two critical pieces of information, Anderson recounted. Microsoft relies on a web portal to allocate physical server space for virtualized computing resources.

A red team exercise refers to an internal team playing the role of an attacking entity to test the target organization's defenses.Īnderson recounted one such red team inquiry conducted against an internal Azure resource provisioning service that uses ML to dole out virtual machines to Microsoft employees. Nowadays, having learned to take ML security seriously, Microsoft conducts red team exercises against its ML models. Within 24 hours, Tay was parroting toxic input from online trolls and was subsequently deactivated. Launched in 2016 as interactive entertainment, Tay had been programmed to learn language from user input.

The fate of Microsoft's Tay twitter chatbot illustrates why ML security should be seen as a practical matter rather than an academic exercise. In other words, securing ML models is a necessary step to defend against more commonplace risks.
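To make "featurization" concrete: it is the step that turns a raw record into the numeric vector a model actually consumes. The sketch below is purely illustrative and assumes a hypothetical VM request with made-up fields (`cpu_cores`, `memory_gb`, `region`) and a made-up region list; none of these names come from Microsoft's service. It encodes numeric fields directly and a categorical field as a one-hot vector.

```python
# Hypothetical featurization sketch. The VmRequest fields and REGIONS list are
# illustrative assumptions, not details of Microsoft's provisioning service.
from dataclasses import dataclass

# Assumed fixed list of categories; each gets one slot in the one-hot encoding.
REGIONS = ["eastus", "westeurope", "southeastasia"]


@dataclass
class VmRequest:
    cpu_cores: int
    memory_gb: float
    region: str


def featurize(req: VmRequest) -> list[float]:
    """Map a request to [cpu_cores, memory_gb, one-hot(region)...]."""
    # 1.0 in the slot matching the request's region, 0.0 elsewhere;
    # an unknown region yields all zeros.
    one_hot = [1.0 if req.region == r else 0.0 for r in REGIONS]
    return [float(req.cpu_cores), req.memory_gb] + one_hot


if __name__ == "__main__":
    vec = featurize(VmRequest(cpu_cores=4, memory_gb=16.0, region="westeurope"))
    print(vec)  # [4.0, 16.0, 0.0, 1.0, 0.0]
```

The point for the attack scenario is that someone who knows a mapping like this and can read the training data can reproduce the same vectors, which is what makes building a look-alike model for offline testing feasible.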
